Everyone talks about it, everyone wants to work with it: machine learning. However, entry into the artificial intelligence (AI) sector poses major problems for companies: there is a lack of know-how and personnel. These tools and frameworks help with the first steps.

A woman in Japan is suffering from blood cancer. For several months, she is treated in a hospital with two medications that are considered reliable in the field. But she does not recover. Her condition deteriorates, and the doctors are at a loss. They then feed her genetic data, which had changed during treatment, into IBM Watson. IBM’s artificial intelligence compares the data with those of 20 million other patients within minutes.

The result: the woman suffers from a particularly rare form of blood cancer. A substitute drug proposed by IBM Watson eventually takes effect. A short time later, the woman can leave the hospital. Even though this example is still one of a few isolated cases in the business world, many companies now dream of similar scenarios when it comes to developing their own applications around artificial intelligence and machine learning.

After all, such an investment can translate into valuable time and cost savings. However, the rapid spread of these technologies poses two serious problems for companies: a lack of know-how about acquiring data from ongoing operations on the one hand, and a lack of qualified developers on the other. That makes it all the more important to familiarize yourself with the basics of the topic. On the following pages we show how this works.

What is Machine Learning?

It starts with some reading, which is best done with suitable books or online tutorials. The online course by Andrew Ng, one of the best-known professors in the field of machine learning at Stanford University in Silicon Valley, has become an absolute classic. The course also makes it possible to get in touch with state-of-the-art technologies without attending an elite university.

This leads to another important insight: the field of machine learning is very academic. If you want to understand the theories behind these algorithms in depth, you have to invest a lot of time, and this often only succeeds through a lengthy course of study. Only then will you be able to understand and optimize these algorithms. However, that is no reason to give up. Numerous frameworks also give newcomers the opportunity to learn machine learning in practice by working with pre-trained models.

There are several forms of machine learning: supervised, semi-supervised, unsupervised and reinforcement learning. If an algorithm is supervised, it is told, for example by a label, that a specific data point belongs to a certain class or has a specific target value. Artificial neural networks and decision trees are examples of algorithms that are typically trained this way. If the algorithm is unsupervised, on the other hand, it receives no labels and must find out for itself which structure the data contains, such as groups of similar data points. Supervised learning is the most widespread form, which is why many tutorials aimed at newcomers deal primarily with it.
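As a rough illustration of the unsupervised case, the sketch below groups a handful of made-up rent values into two clusters without ever seeing a label. It uses a tiny one-dimensional k-means with k = 2, which is a stand-in chosen for brevity and not a method named in this article; the function name `two_means` and the data are hypothetical.

```python
def two_means(values, iterations=10):
    """Split 1-D values into two groups with a minimal k-means (k = 2)."""
    if min(values) == max(values):
        raise ValueError("need at least two distinct values")
    # Simple deterministic start: smallest and largest value as initial centers.
    centers = [min(values), max(values)]
    for _ in range(iterations):
        groups = ([], [])
        for v in values:
            # Assign each value to the nearer of the two centers.
            nearer = 0 if abs(v - centers[0]) <= abs(v - centers[1]) else 1
            groups[nearer].append(v)
        # Move each center to the mean of the values assigned to it.
        centers = [sum(g) / len(g) for g in groups]
    return groups


# Hypothetical monthly rents; note that no labels are provided.
rents = [880, 900, 950, 2400, 2500, 2650]
cheap, expensive = two_means(rents)
print(cheap)
print(expensive)
```

The algorithm is never told which rents count as "cheap" or "expensive"; the two groups emerge only from the distances between the values.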

Supervised learning

In supervised learning, each data point receives a label in addition to its representation as a vector (the so-called “feature vector”). If you use a classifier (which assigns data points to classes), the label is the name of the class to which the data point belongs. Using these labels, the algorithm attempts to separate the data points correctly during training. Besides classification, there is also regression. There are no classes here; the label is a numeric value, the target value for the data point. For example, if you want to build a model that estimates the monthly rent of an apartment based on various factors (number of bathrooms, number of bedrooms, square footage), these factors are the features and the rent is the label (or target).
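The difference between the two label types can be sketched in a few lines. The example below is only an illustration under stated assumptions: the apartments, rents and class names are made up, and a 1-nearest-neighbour lookup stands in for a real learning algorithm because it fits in pure Python. The same feature vectors are used twice, once with class labels (classification) and once with numeric targets (regression).

```python
from math import dist

# Hypothetical training data: feature vector = (bathrooms, bedrooms, square footage).
features = [
    (1, 1, 450),
    (1, 2, 700),
    (2, 3, 1100),
    (2, 4, 1500),
]
size_labels = ["small", "small", "large", "large"]  # class names -> classification
rent_labels = [900.0, 1300.0, 1900.0, 2600.0]       # numeric targets -> regression


def nearest_index(query):
    """Index of the training point closest to the query (Euclidean distance)."""
    return min(range(len(features)), key=lambda i: dist(features[i], query))


def classify(query):
    """Classification: return the class label of the nearest training point."""
    return size_labels[nearest_index(query)]


def estimate_rent(query):
    """Regression: return the numeric target of the nearest training point."""
    return rent_labels[nearest_index(query)]


new_apartment = (1, 2, 650)
print(classify(new_apartment))
print(estimate_rent(new_apartment))
```

Because the features are not scaled here, square footage dominates the distance; a real project would normally normalize the features first.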

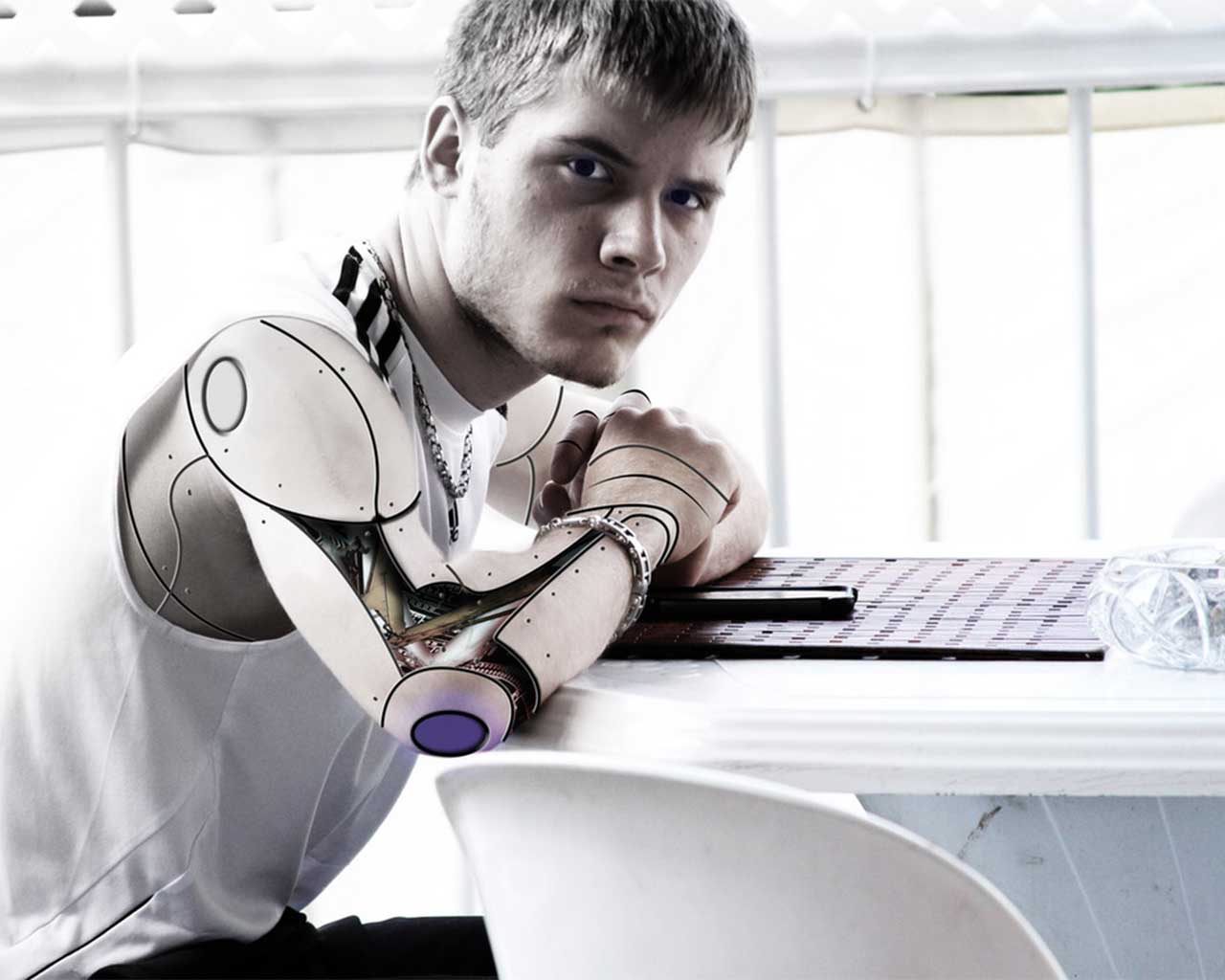

Machine learning can solve many problems and assist in making important decisions. If the machine includes human input in its decision, this is called human-in-the-loop (HITL). As mentioned earlier, IBM Watson can, for example, help to make the right diagnosis in the event of illness. In autonomous vehicles, in turn, machine-learning algorithms will ensure that people reach their destination.

To start with, however, you should turn to simpler projects that you can solve in a short time, and in this way implement your own application or startup idea. There you usually face pure data processing problems such as image recognition or the automatic processing of text. For most of these problems, solutions can already be found on Stack Overflow and GitHub.

Search for suitable data

However, any good statistic needs a representative set of samples to be meaningful. This means that you need a large number of data points so that the trained models make the right decisions.

But where does this data come from? There are various portals that offer free datasets. At Kaggle, machine learning practitioners compete against each other by testing their algorithms on free datasets. In the UCI Machine Learning Repository you will find 438 datasets on various topics, from the classification of buzz in social media channels to a regression of Facebook metrics. Another data repository is Datahub. Its founders, Adam Kariv and Rufus Pollock, have fulfilled their dream of an open place for exchanging data. Government agencies also hold a lot of data that they want to make available, covering areas such as agriculture, climate, education and many more. One example is the platform data.gov for the USA.

Once a dataset has been selected to train the application, you can start right away. The next step is to decide what to do with the data. Is classification worthwhile for this dataset? The answer is “yes” if the dataset has a class label. These are mostly alphanumeric identifiers (for example “spam” and “nospam” in an antispam database). Or is regression more useful here? The answer is “yes” if the dataset has a numerical target value (for example, the price of a stock in a forecast).
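This decision rule can be written down as a small helper. The sketch below is a heuristic under stated assumptions, not a standard library function: `suggest_task` is a hypothetical name, and it simply checks whether every value in the target column is numeric.

```python
def suggest_task(target_values):
    """Guess the learning task from the target column of a dataset.

    Heuristic only: purely numeric targets suggest regression,
    anything else (e.g. string identifiers) suggests classification.
    """
    if not target_values:
        raise ValueError("target column is empty")

    def is_number(value):
        # bool is a subclass of int in Python, so exclude it explicitly.
        return isinstance(value, (int, float)) and not isinstance(value, bool)

    if all(is_number(v) for v in target_values):
        return "regression"
    return "classification"


print(suggest_task(["spam", "nospam", "spam"]))  # class labels
print(suggest_task([101.5, 99.8, 103.2]))        # numeric target values
```

The heuristic has a known blind spot: class labels that are encoded as numbers (for example 0 and 1) would be read as a regression target, so the final decision still needs a look at what the column means.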

Also recommended is KNIME (Konstanz Information Miner), a graphical tool for data analysis. With KNIME you can quickly and easily visualize your data, evaluate it and train models on it. The software offers the most common charts and graphs used in statistics. After inspecting the dataset, you can easily connect the components for a machine-learning application via drag and drop and press play.

Since graphical tools are particularly well suited for getting started and for visualizing the work steps, it is advisable to sketch the workflow at least once: first training a machine-learning model, then testing or evaluating it. An alternative to KNIME is RapidMiner.
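The same train-then-evaluate workflow can also be sketched in code. The following is a minimal illustration and not what KNIME or RapidMiner do internally: the spam data is made up, the helper names are hypothetical, and a 1-nearest-neighbour model is used only because it needs no external library.

```python
import random
from math import dist


def train_test_split(rows, test_fraction=0.25, seed=0):
    """Shuffle the rows and hold back a fraction of them for testing."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    n_test = max(1, int(len(rows) * test_fraction))
    return rows[n_test:], rows[:n_test]


def train(train_rows):
    """'Training' a 1-nearest-neighbour model just stores the labelled examples."""
    return list(train_rows)


def predict(model, features):
    """Return the label of the stored example closest to the given features."""
    nearest = min(model, key=lambda row: dist(row[0], features))
    return nearest[1]


def accuracy(model, test_rows):
    """Share of held-out rows whose predicted label matches the true label."""
    hits = sum(predict(model, features) == label for features, label in test_rows)
    return hits / len(test_rows)


# Hypothetical data: (message length, number of links) -> label
rows = [
    ((120, 0), "nospam"), ((95, 0), "nospam"), ((150, 1), "nospam"), ((110, 0), "nospam"),
    ((20, 5), "spam"), ((35, 7), "spam"), ((25, 6), "spam"), ((30, 4), "spam"),
]

train_rows, test_rows = train_test_split(rows)      # step 1: split the data
model = train(train_rows)                           # step 2: train on one part
score = accuracy(model, test_rows)                  # step 3: evaluate on the rest
print(f"accuracy on held-out data: {score:.2f}")
```

The important design choice is that the model is scored only on rows it has not seen during training; evaluating on the training data would overstate its quality.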
